Executive Summary

The transition to Generative AI represents a fundamental shift from asset-light software economics to asset-heavy infrastructure economics, disproportionately favoring vertically integrated incumbents.

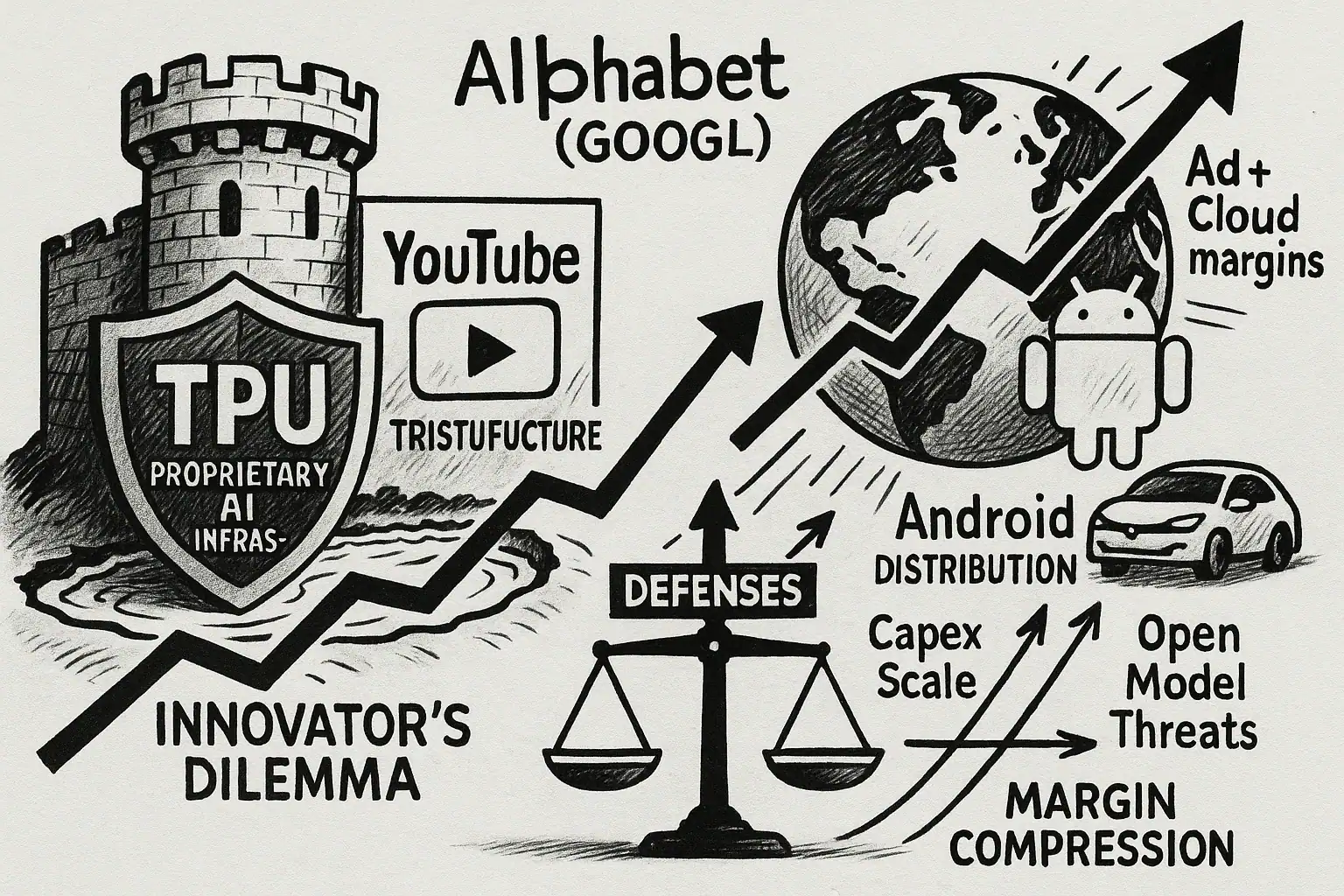

While immediate market narratives focus on the disruption of query-based search, a rigorous analysis of unit economics reveals that Alphabet possesses a durable moat through its proprietary Tensor Processing Unit silicon, sovereign data assets in YouTube and Workspace, and global distribution dominance.

Long-term value accrual is projected to shift from the commoditized model layer to the scarcity of efficient compute infrastructure and proprietary context within Google Cloud environments.

1. Macroeconomic Context: The AI Capital Expenditure Supercycle

1.1 Structural Shift to Asset-Heavy Economics

The AI Capital Expenditure Supercycle is the current phase of the global technology sector characterized by an unprecedented surge in physical infrastructure spending.

Unlike the mobile and cloud transitions, which leveraged existing telecommunications backbones, the Generative AI era necessitates the wholesale reconstruction of the data center industrial base, raising barriers to entry to levels reminiscent of the utility sector.

This shift forces companies to prioritize robust infrastructure over agile, low-capital software iterations.

- Combined Big Tech capital expenditures for 2025 and 2026 are projected to exceed $405 billion (Edward Conard).

- Alphabet has raised its 2025 capital budget to approximately $92 billion to secure the physical means of intelligence production (Investing.com).

- Asset Fungibility is the ability of Generative AI hardware assets to be repurposed across different workloads. Unlike fiber optic cables, GPUs and TPUs can dynamically switch between training, inference, and enterprise leasing within Google Cloud, mitigating the risk of total asset obsolescence.

1.2 Energy Constraints and Physics-Based Moats

The Physics Tax is the compounding cost disadvantage faced by competitors relying on less energy-efficient hardware. In a constrained energy market, the marginal cost of intelligence is inextricably linked to power consumption, creating a scenario where energy efficiency translates directly to gross margin. This constraint makes the efficiency of the underlying infrastructure a primary determinant of long-term profitability in Generative AI.

- Google Trillium TPUs are reported to be over 67% more energy-efficient than previous generations (https://blog.google/technology/ai/difference-cpu-gpu-tpu-trillium/).

- AI-enhanced search queries are estimated to be up to 10 times more carbon-intensive than traditional queries (https://matttutt.me/what-is-the-environmental-cost-of-googles-ai-overview-searches/).
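To make the link between efficiency and cost concrete, the following minimal sketch converts a stated efficiency gain into energy used per unit of work. It assumes that "more energy-efficient" means more work per unit of energy (performance per watt), which the cited claim does not spell out; the function name is hypothetical.

```python
def relative_energy_per_unit_work(efficiency_gain: float) -> float:
    """Energy needed per unit of work, relative to the baseline chip.

    efficiency_gain is the fractional improvement in work per unit of
    energy, e.g. 0.67 for "67% more energy-efficient".
    Assumption: efficiency is measured as work per unit of energy.
    """
    if efficiency_gain <= -1.0:
        raise ValueError("efficiency_gain must be greater than -1")
    return 1.0 / (1.0 + efficiency_gain)


ratio = relative_energy_per_unit_work(0.67)
print(f"Energy per unit of work vs. baseline: {ratio:.3f}")  # 0.599
print(f"Energy saved for the same work: {1 - ratio:.1%}")    # 40.1%
```

Under that reading, a 67% efficiency gain corresponds to roughly 40% less energy for the same workload, not 67% less.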

2. Infrastructure Layer: Vertical Integration and Unit Economics

2.1 The Tensor Processing Unit Cost Advantage

The Nvidia Tax is the gross margin premium captured by Nvidia on GPU sales, currently estimated in the mid-70% range. Cloud providers purchasing Nvidia hardware effectively pay a levy that compresses their own operating margins, whereas Google avoids this cost through vertical integration of its infrastructure. This allows Google Cloud to offer competitive pricing while maintaining healthier margins than competitors dependent on merchant silicon.

- Analysts estimate Google can operate TPU capabilities for approximately 20% less than entities purchasing Nvidia processing power (Investopedia).

- Google Trillium TPUs achieve 4.7x peak compute performance compared to the previous v5e generation (https://blog.google/technology/ai/difference-cpu-gpu-tpu-trillium/).
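The size of the margin premium described above can be illustrated with simple arithmetic. This sketch uses 75% as an illustrative point within the "mid-70% range" quoted in the text; the function name is hypothetical, and the calculation covers only the chip purchase price, not total operating cost.

```python
def price_multiple_from_gross_margin(gross_margin: float) -> float:
    """Selling price as a multiple of the seller's cost of goods.

    gross_margin = (price - cost) / price, so price = cost / (1 - gross_margin).
    """
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross_margin must be in [0, 1)")
    return 1.0 / (1.0 - gross_margin)


# 0.75 is an illustrative value inside the "mid-70% range" cited in the text.
multiple = price_multiple_from_gross_margin(0.75)
print(f"Buyer pays {multiple:.1f}x the seller's cost of goods")  # 4.0x
```

This multiple does not by itself reproduce the roughly 20% operating-cost estimate cited above, which would also depend on costs not given here, such as power, networking, and facilities.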

2.2 System-Level Optimization

Cluster Coherence is the system-level optimization achieved when a single entity controls the entire compute stack. Google's AI Hypercomputer architecture integrates TPUs with proprietary optical interconnects called Jupiter and optimized storage stacks to minimize the latency bottlenecks that plague standard GPU clusters. This infrastructure coherence is critical for training massive Generative AI models efficiently.

- Multislice technology allows large AI workloads to be distributed across thousands of chips, maximizing hardware utilization rates (https://cloud.google.com/blog/products/compute/ironwood-tpus-and-new-axion-based-vms-for-your-ai-workloads).

- This integration allows Google to decouple intelligence growth from linear cost scaling within its infrastructure.

3. Data and Distribution Moat: Sovereign Territory

3.1 Multimodal Training Supremacy

Data Gravity is the attractive force exerted by massive datasets that pulls applications and services closer to the data source. YouTube serves as the world's largest repository of human activity and speech, creating a high barrier to entry for competitors attempting to train video-reasoning Generative AI models. This data advantage is further secured by legal sovereignty over the platform.

- Sovereign Data Rights allow Google to update Terms of Service to permit training, whereas competitors must scrape data at legal risk or pay licensing fees (https://www.mindstick.com/news/4594/google-used-youtube-s-video-library-to-train-its-most-powerful-ai-yet-report-reveals).

- Gemini 1.5 Pro demonstrates high fidelity in video and audio reasoning derived directly from this proprietary corpus.

3.2 The Enterprise Context Window

The Context Wall is the high switching cost associated with migrating proprietary enterprise data. Google Workspace provides a captive environment where Generative AI acts as a utility within existing workflows, reducing the likelihood of enterprise churn to other infrastructure providers. This deep integration anchors users to Google Cloud services.

- Integration vs. Destination distinguishes Google's strategy of embedding AI into Docs and Gmail from that of competitors, who require users to visit a separate chatbot interface (https://workspace.google.com/blog/ai-and-machine-learning/beyond-prompt-how-measure-value-gemini-brings-your-company).

- This grounding in private user data creates a moat against generic model providers who lack contextual access.

3.3 Mobile Distribution Dominance

The Edge-Cloud Hybrid Architecture is a cost-optimization strategy that shifts inference processing from central data centers to local consumer devices. Google's control of Android allows it to deploy models like Gemini Nano directly to billions of devices, alleviating pressure on central infrastructure.

- Google can offload inference costs to the user's battery and hardware, shifting the marginal cost of compute away from its own P&L.

- Project Astra envisions a universal assistant capable of seeing and acting through phone sensors, a capability dependent on deep OS-level integration (https://deepmind.google/models/project-astra/).

4. The Innovator's Dilemma: Search Economics

4.1 Cost Dynamics and Margin Compression

Cost Per Query (CPQ) is the marginal expense incurred to process a single user search request. Generative AI queries are computationally more intensive than traditional index retrievals, creating immediate pressure on gross margins. However, robust infrastructure investments are driving these costs down over time.

- The Zero-Click Phenomenon refers to users receiving answers directly from AI without clicking links, potentially reducing traditional ad inventory (https://www.semrush.com/blog/semrush-ai-overviews-study/).

- However, data indicates that AI Overviews drive longer, more complex queries, potentially expanding the Total Addressable Market.

4.2 Evolution of Monetization

Commission-Based Monetization is the economic model where the search engine captures value by fulfilling a transaction rather than just redirecting traffic. As Generative AI agents perform more complex tasks like booking travel, they theoretically justify a higher cost-per-acquisition.

- Google has integrated sponsored suggestions directly into AI Overviews, creating new inventory that blends organic answers with commercial intent (https://searchengineland.com/google-ads-ai-overviews-spotted-455889).

- High-intent commercial queries still favor visual shopping ads, allowing Google to subsidize expensive informational queries.

5. Commoditization of the Model Layer

5.1 Commoditize Your Complement Strategy

Commoditize Your Complement is a strategic maneuver where a company seeks to reduce the cost of a complementary product to zero to increase the value of its core product. Google publishes research to commoditize the model layer, driving value back to its proprietary infrastructure and data assets. This strategy protects Google Cloud revenues by making the underlying hardware the scarcest resource.

- If high-quality intelligence becomes abundant via open source, the economic value shifts to the owners of scarce complements: proprietary data and distribution.

- This dynamic suggests pure-play model labs face a precarious economic future compared to integrated hyperscalers (https://news.ycombinator.com/item?id=44035397).

5.2 Context as the Technical Moat

Long Context Windows are technical capabilities allowing models to process vast amounts of information in a single prompt. This reduces the need for complex Retrieval Augmented Generation pipelines and leverages Google Cloud infrastructure to handle massive data loads.

- Gemini 1.5 Pro supports a 2-million-token context window (Google Gemini API).

- This capability locks developers into Google's architecture, as switching to a smaller context model would require rebuilding application logic.
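The trade-off between a long context window and a retrieval pipeline can be sketched as a simple budget check. This is an illustration only: the characters-per-token ratio is a rough heuristic assumed here rather than a real tokenizer, and the function names and the reserved output budget are hypothetical.

```python
from math import ceil

CONTEXT_WINDOW_TOKENS = 2_000_000  # figure quoted in the text above
ASSUMED_CHARS_PER_TOKEN = 4        # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (heuristic only)."""
    return ceil(len(text) / ASSUMED_CHARS_PER_TOKEN)


def fits_in_one_prompt(documents: list[str], reserved_output_tokens: int = 8_192) -> bool:
    """True if all documents plus an output budget fit in one context window."""
    needed = sum(estimate_tokens(d) for d in documents) + reserved_output_tokens
    return needed <= CONTEXT_WINDOW_TOKENS


# Placeholder corpus: 3.5 million characters, about 875,000 estimated tokens.
corpus = ["x" * 1_000_000, "y" * 2_500_000]
print(fits_in_one_prompt(corpus))  # True
```

An application built around a check like this would need a chunking or retrieval step as soon as the window shrinks below the corpus size, which is the rebuilding cost the switching argument relies on.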

6. Financial Analysis and Valuation

6.1 The Disruption Discount

The Disruption Discount is the market valuation gap reflecting investor fear of search cannibalization. Alphabet trades at a lower multiple than Microsoft, despite having lower internal compute costs due to its TPU infrastructure. This discount may narrow as the efficiency of Google's Generative AI implementation becomes apparent.

- Analysts note that Microsoft's AI revenue is heavily taxed by the cost of running OpenAI models on Nvidia hardware (https://www.morganstanley.com/im/publication/insights/articles/article_thebeatseptember2025.pdf).

- As investors shift focus to Return on Invested Capital, Google's lower capital intensity per unit of compute may drive a re-rating.

6.2 Cloud Growth Trajectories

Revenue Run Rate is the annualized revenue extrapolated from the most recent quarter. Google Cloud growth indicates that the vertical integration strategy is validating itself in the enterprise market.

- Google Cloud reported $15.2 billion in Q3 2025 revenue, a 34% year-over-year increase (https://www.crn.com/news/cloud/2025/microsoft-vs-aws-vs-google-cloud-q3-2025-earnings-face-off).

- This growth rate exceeds that of AWS (20%) and Microsoft Azure (28%).
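Applying the run-rate definition to the reported quarter is a one-line calculation. The sketch below uses only the two figures quoted above ($15.2 billion and 34% growth); the function names are hypothetical, and the prior-year quarter is an implied value derived from those figures rather than a reported number.

```python
def annualized_run_rate(quarterly_revenue_bn: float) -> float:
    """Revenue run rate: most recent quarter's revenue multiplied by four."""
    return quarterly_revenue_bn * 4


def implied_prior_year_quarter(quarterly_revenue_bn: float, yoy_growth: float) -> float:
    """Back out the year-ago quarter from current revenue and growth rate."""
    return quarterly_revenue_bn / (1.0 + yoy_growth)


q3_2025_revenue_bn = 15.2  # from the text
yoy_growth = 0.34          # from the text

print(f"Run rate: ${annualized_run_rate(q3_2025_revenue_bn):.1f}B")  # $60.8B
print(f"Implied Q3 2024: ${implied_prior_year_quarter(q3_2025_revenue_bn, yoy_growth):.1f}B")  # $11.3B
```

A run rate assumes the latest quarter repeats four times, so for a business growing at this pace it tends to understate the following twelve months and overstate the trailing twelve.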

7. Risks and Regulatory Factors

7.1 Antitrust Vulnerability

Antitrust Breakup Risk is the threat of regulatory intervention forcing the divestiture of core assets. A forced separation of Chrome or Android would shatter the distribution moat and drastically increase Traffic Acquisition Costs. This remains the most significant threat to Google's dominance in Generative AI distribution.

7.2 Hallucination Liability

Hallucination Liability is the legal and reputational risk arising from AI models generating factually incorrect information. This poses a specific threat to the trust-based search business model, where accuracy is paramount compared to creative applications.

- Benchmarks indicate varying hallucination rates among frontier models, with Google Gemini showing competitive but not flawless performance (Lakera).
